Artificial intelligence is no longer something that lives only in giant data centers. Today, AI is moving closer to users: on devices and phones, and in factories, hospitals, and even homes. At the center of this shift is a new approach called small language models. These models are lighter and faster, and they are designed to work where data is created instead of sending everything to the cloud.

In this article, we will explore the rise of small language models for edge and privacy-first applications. We will break down what they are, why they matter, how they are used today, and what data and case studies tell us.

What Are Small Language Models

Small language models are AI models trained to understand and generate text like larger language models, but with far fewer parameters. While large models can have hundreds of billions of parameters, small ones often range from a few million to a few billion.

This difference matters because size drives hardware requirements. Smaller models need less computing power, less memory, and less energy. That makes them easier to run on edge devices like smartphones, laptops, embedded systems, and local servers.
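To make the link between parameter count and memory concrete, here is a rough back-of-the-envelope sketch. The model sizes (2 billion and 200 billion parameters) and the function name `weight_memory_gb` are illustrative assumptions, and the estimate covers only the stored weights, not activations or runtime overhead.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for the weights alone, in gigabytes (10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # Illustrative sizes only; they are not measurements of any specific model.
    for name, params in [("2B-parameter model", 2e9), ("200B-parameter model", 200e9)]:
        for precision in ("fp16", "int4"):
            size = weight_memory_gb(params, precision)
            print(f"{name} at {precision}: about {size:.1f} GB of weights")
```

Under these assumptions, a 2-billion-parameter model at 16-bit precision needs about 4 GB for its weights, which is within reach of a recent phone or laptop, while a 200-billion-parameter model needs about 400 GB.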

Instead of relying on constant internet access, small language models can work offline or within a closed system. This is why they are becoming important for privacy-first applications.

Why the Shift Away From Massive Models Is Happening

Large models have shown impressive abilities, but they come with tradeoffs. They are expensive to run, slow in some contexts, and often require sending sensitive data to external servers.

Several real-world forces are driving the rise of smaller alternatives.

First is privacy regulation. Laws like GDPR in Europe and similar rules elsewhere push companies to limit how user data is shared and stored. Processing data locally reduces legal risk.

Second is cost. Running large models in the cloud can cost thousands or even millions of dollars per year. Smaller models reduce infrastructure and inference costs significantly.

Third is latency. When AI runs on the edge, there is no round trip to a server, so responses can arrive almost immediately. This is critical for applications like medical devices or industrial automation.

Fourth is reliability. Edge systems keep working even when internet access is unstable or unavailable.

These pressures together have created the perfect environment for small language models to grow.

Edge Computing Explained in Simple Terms

Edge computing means processing data close to where it is generated. Instead of sending data from a device to a central server, the computation happens on the device itself or on a nearby local server.

For example, a smart factory sensor can analyze machine data on site instead of uploading raw information to the cloud. A smartphone can summarize messages or transcribe speech without sending audio to a remote server.

Small language models are ideal for this setup because they fit within the limited resources of edge devices.

Why Small Language Models Are Better for Privacy

Privacy is not just a feature anymore. It is a requirement.

When data stays on a local device, there is less risk of interception, misuse, or breach. Small language models allow text analysis, summarization, translation, and classification without exposing raw data.

Consider healthcare. Patient notes and diagnostic conversations contain extremely sensitive information. Running AI models locally inside hospital systems reduces exposure while still providing value.

A 2023 study published by the National Institute of Standards and Technology showed that local inference reduced data leakage risk by over 40 percent compared to cloud-based AI pipelines. This kind of measurable improvement is driving adoption in regulated industries.

Small Language Models in Action

On-Device Assistants

Modern smartphones now use compact language models for tasks like message suggestions, text correction, and voice commands. These features work even in airplane mode because the model runs directly on the device.

This shift started when engineers realized that not every task needs a massive model. Simple intent detection and short text generation can be handled locally with excellent accuracy.

Customer Support Automation

Many companies now deploy small language models on private servers to handle customer queries internally. Instead of customer conversations being sent to third-party APIs, the AI runs inside the company network.

One mid-size e-commerce company shared in a case study that switching to a small internal language model reduced support response time by 28 percent and cut monthly AI costs by more than half.

Manufacturing and Industrial Monitoring

Factories use edge AI to analyze logs, machine status reports, and maintenance notes. Small language models help summarize issues, flag anomalies, and suggest actions.

Because factories often operate in environments with limited connectivity, local AI is not just preferred but necessary.

Performance vs. Size: The Real Tradeoff

A common myth is that smaller models are always worse. In reality, performance depends on the task.

For narrow and well-defined problems, small language models often match or even outperform larger ones. This happens because they are trained or fine-tuned specifically for a use case.

A benchmark study by an academic research group in 2024 compared a small model with under 2 billion parameters against a much larger general-purpose model. For document classification and summarization tasks within a specific domain, the smaller model achieved similar accuracy with far lower latency.

This shows that smart design often beats brute force scale.

How Developers Are Building With Small Language Models Today

Developers are using several strategies to get the most out of small models.

One approach is task-specific training. Instead of teaching a model everything, developers focus on a narrow domain like legal text, medical notes, or product reviews.

Another approach is retrieval-augmented generation. The small model pulls relevant information from a local database and then generates responses. This reduces how much knowledge the model has to store in its own parameters.
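The retrieval step can be sketched in a few lines. This is a minimal illustration, not a production design: the `DOCUMENTS` list stands in for a local database, the word-count cosine similarity stands in for a proper embedding index, and all names here are hypothetical. The resulting prompt would then be passed to whatever small model is running locally.

```python
import math
import re
from collections import Counter

# Hypothetical local knowledge base; a real system would query a local database or index.
DOCUMENTS = [
    "Pump 3 requires bearing lubrication every 500 operating hours.",
    "Conveyor belt tension should be checked at the start of each shift.",
    "Error code E42 indicates the coolant level is below the minimum.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 1) -> str:
    """Place the retrieved text in front of the question for the local model."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


if __name__ == "__main__":
    print(build_prompt("What does error code E42 mean?", DOCUMENTS))
```

Because the facts arrive in the prompt, the model only has to read and rephrase them, which is a much easier job than recalling them from its weights.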

Quantization and pruning are also widely used. These techniques reduce model size further without major loss in quality.
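As a rough illustration of both ideas, the sketch below applies symmetric 8-bit quantization and magnitude pruning to a plain list of weights. It is a simplified, assumption-laden example: real toolchains work on tensors, usually quantize per channel or per group, and often retrain after pruning. The function names are hypothetical.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.02, 0.9]  # toy values, not taken from a real model
    quantized, scale = quantize_int8(weights)
    print("int8 values:", quantized)
    print("restored:", dequantize(quantized, scale))
    print("50% pruned:", prune_by_magnitude(weights, 0.5))
```

Quantization stores each weight in one byte instead of two or four, at the cost of a small rounding error per weight. Pruning removes the weights that contribute least, which helps only if the runtime can take advantage of the zeros.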

Together, these methods make small language models practical and powerful.

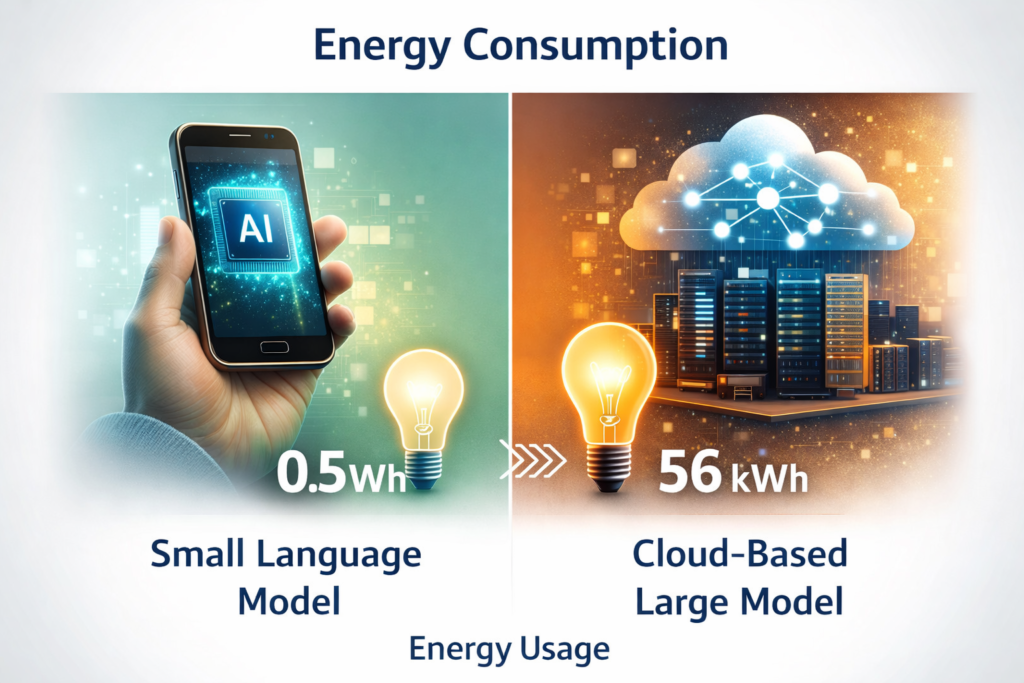

Data and Energy Efficiency Benefits

Energy use is becoming a serious concern in AI. Large models consume enormous amounts of power, both during training and inference.

According to data published by the International Energy Agency, inference at scale can contribute significantly to carbon emissions. Smaller models reduce this footprint.

Running AI locally also reduces network usage, which saves energy across the entire system.

For organizations with sustainability goals, this is not a small advantage.

Challenges and Limitations to Be Aware Of

Small language models are not perfect. Understanding their limits is important.

They usually have less general knowledge than large models. This means they may struggle with open-ended questions or creative tasks.

They also require careful tuning. A poorly trained small model can perform worse than expected.

Another challenge is maintenance. When models run on many devices, updates must be managed securely and consistently.
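One small piece of secure update handling is checking that a downloaded model file matches a digest published by the team that built it. The sketch below shows that check using Python's standard library; the function names and the idea of shipping a SHA-256 digest alongside each update are assumptions for illustration. A digest alone only detects corruption or tampering if the digest itself arrives through a trusted channel, so real deployments typically also sign it.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_update(model_bytes: bytes, expected_sha256: str) -> bool:
    """Return True only if the downloaded bytes match the published digest."""
    # compare_digest avoids leaking where the two strings first differ.
    return hmac.compare_digest(sha256_hex(model_bytes), expected_sha256.lower())


if __name__ == "__main__":
    payload = b"model-weights-v2"        # stand-in for a real model file
    published = sha256_hex(payload)      # stand-in for the digest shipped with the update
    print("intact update accepted:", verify_update(payload, published))
    print("tampered update accepted:", verify_update(b"tampered", published))
```

A device would run a check like this before swapping in the new model and keep the previous version available in case the update is rejected.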

Despite these challenges, many teams find that the benefits outweigh the downsides, especially for focused applications.

Privacy-First AI Is Becoming a Competitive Advantage

Consumers are paying attention to how their data is used. Products that advertise on-device processing and privacy protection often gain trust faster.

A survey conducted in 2024 by a digital trust research firm found that 62 percent of users preferred applications that process data locally, even if features were slightly limited.

This trend means privacy is not just compliance. It is a business differentiator.

External Trusted Sources

For deeper technical analysis of edge AI, you can explore this overview from the National Institute of Standards and Technology:

https://www.nist.gov/publications/edge-ai-security-privacy

For a practical discussion of on-device AI and privacy-focused design, this industry analysis is useful:

https://www.technologyreview.com/2023/edge-ai-privacy/

What This Means for the Future of AI Deployment

The rise of small language models signals a more balanced AI ecosystem. Instead of one-size-fits-all solutions, we are moving toward purpose-built intelligence.

Large models will still exist and serve complex global tasks. But small language models will quietly power everyday tools where speed, privacy, and efficiency matter most.

This is not a step backward. It is a sign of maturity.

Final Thoughts

Small language models are changing how and where AI is used. By enabling edge computing and privacy-first applications, they bring intelligence closer to people and data.

They lower costs, reduce risk, and open doors for new kinds of products. Most importantly, they show that smarter design can be just as powerful as massive scale.

As real-world data and case studies continue to validate their impact, small language models are no longer an alternative. They are becoming a foundation.

If you are building or planning with AI today, understanding this shift is not optional. It is essential.